A Spotlight on A.I. on Devices

A look at A.I. running on devices nearest the data

Origins

This week we take a look at A.I. running on devices nearest the data. Fudge Sunday inspiration for this week comes from a recent comment at M.G. Siegler’s 500ish.com:

My working theory is that “the cloud” will have some advantages and then there will be the “on device” advantages heavily marketed by the same Apple which has been angling ever closer to making supercomputers that fit in your lap and in your hand. So, even if you are “doing email” there will be abilities for user opt-in beneficial machine learning (A.I.) on your activities that provide recommendations, improvements, and surfacing knowledge of the past that are exclusively “on device” capabilities. Said another way, Spotlight is ripe for being put in… the spotlight.

Source: Apple’s Literal “Scary Fast” Event on 500ish by M.G. Siegler

In related inspiration, if you aren’t already reading Matt Baker’s newsletter or following his updates on LinkedIn, here are a few recent gems:

[…] it will run in the cloud, in the datacenter, at the edge, and on your workstations and notebooks

Source: LinkedIn

[…] the author massively optimized the model adaptation process. Performing fine-tuning on a 7 billion parameter LLM on a SINGLE rather small GPU with only 16GB of memory.

Source: LinkedIn
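For a rough sense of why fitting a 7 billion parameter model into 16GB is notable, here is a back-of-the-envelope sketch. The quoted post does not say which technique was used, so the bytes-per-parameter figures below are my own assumptions (common rules of thumb), and the helper name `weights_gib` is purely illustrative.

```python
# Back-of-the-envelope memory estimate for a 7B parameter model.
# All bytes-per-parameter figures are assumptions, not from the quoted post.
GIB = 1024 ** 3


def weights_gib(params: float, bytes_per_param: float) -> float:
    """Return the memory footprint in GiB for a given bytes-per-parameter budget."""
    return params * bytes_per_param / GIB


PARAMS = 7e9  # "7 billion parameter LLM" from the quote

# Assumption: 16-bit weights take 2 bytes per parameter.
fp16 = weights_gib(PARAMS, 2)

# Assumption: 4-bit quantized weights take 0.5 bytes per parameter.
int4 = weights_gib(PARAMS, 0.5)

# Assumption: full fine-tuning with Adam in mixed precision needs roughly
# 16 bytes per parameter (weights, gradients, and optimizer state),
# before counting activations.
full_ft = weights_gib(PARAMS, 16)

print(f"16-bit weights only: {fp16:.1f} GiB")
print(f"4-bit weights only:  {int4:.1f} GiB")
print(f"Full fine-tuning:    {full_ft:.1f} GiB")
```

Under these assumptions, the 16-bit weights alone take up most of a 16GB card, and naive full fine-tuning lands several times beyond it, which is why adaptation tricks of this kind matter for smaller devices.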

Devices Doing Deep Data Duties

Okay, sounds great, right? More powerful mobile devices mean more ways to see A.I. appear in our pockets!

Oh wait. What about my device battery life?

🤖 + 📱 = 🪫 😭

Device battery life is a reasonable concern. However, there is also a growing number of examples where research and development minded folks assume devices will be doing more data-intensive operations in the near future while simultaneously contemplating (avoiding?) battery anxiety and overall power requirements.

- Enhance energy efficiency of AI models on mobile devices

- Democratizing on-device generative AI with sub-10 billion parameter models

- Image generation with shortest path diffusion

- Adding Low-Power AI/ML Inference to Edge Devices

- tinyML PdM & Anomaly Detection Forum

- tinyML Sponsors

Expect a growing list of A.I. initiatives focused on deterministic power, weight, cooling, and geometry. I should make that an acronym and tag past issues accordingly. DPWCG perhaps?

Also, mark your calendars. As we approach May 2024, analyst coverage of on-device A.I. will reach a natural one-year look-back moment, and plenty of digital ink will be spilled.

After all, there is that whole peak of expectations thing to consider, right? 🤓

Shot and Chaser

And now it’s time for a couple of recent shot and chaser moments.

Shot

Readers might recall my prior coverage of OpenTelemetry (OTel) last year.

Please Please OTel Me Now (2022)

Chaser

Well, along comes Keep!

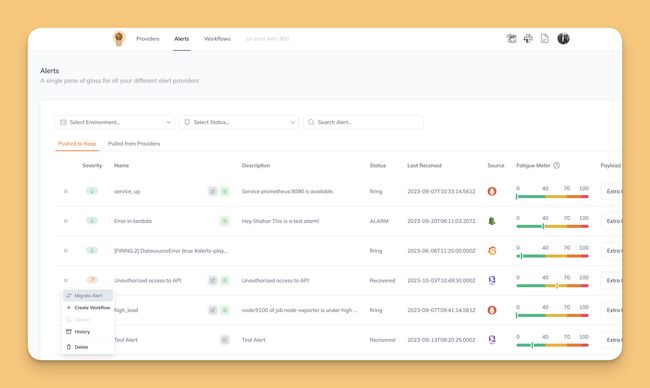

Keep is an open-source alert management and automation tool that provides everything you need to create and manage alerts effectively.

Source: Keep

Shot

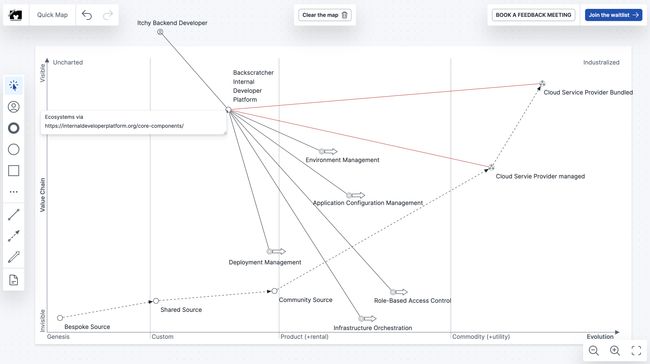

Back in Feb 2023, I wrote about Wardley maps and tried an early access service at mapkeep.com which provides an online tool for strategy mapping.

Source: Map of the Platformatique (2023)

Chaser

Through the wonders of the Fediverse, Matthew Broberg pinged me on Mastodon and I was pulled into a quick discussion with the https://mapkeep.com creator, Tristan Slominski — who is also on Mastodon.

@jay@cuthrell.com I always check to see if previous maps survive translation to new interface… works for this one 😀

Source: https://mastodon.social/@tristanls/111359626043341826

As it turns out, if you want to create your maps you can now join the waitlist! 🗺️🤓

Reader Feedback

I received clever alternative expansions of the L.L.M. acronym in response to last week’s issue, Large Language Marmalade (2023):

- Large Language Marmite 🍯🤣 clever!

- Large Language Molasses 🍯🤣 clever!

- Large Language Mustard 🍯🤣 clever!